By: David Weatherall

Five years ago, a report by the World Health Organization expressed fears that medical advances stemming from the Human Genome Project might widen the gap in levels of healthcare between rich and poor countries.

In particular, the group that prepared the report, of which I was the lead author, warned that techniques and treatments resulting from the sequencing of the human genome could accentuate the so-called ‘10/90 gap’. This term refers to the fact that less than 10 per cent of global spending on health research is devoted to diseases that affect 90 per cent of the world.

The report, titled Genomics and World Health, accepted that richer countries could be expected — primarily for reasons of self-interest — to apply genomic technology to the development of both new treatments and vaccines for some of the world’s major killers, such as HIV/AIDS and tuberculosis.

It also warned that the fruits of genomics research might turn out to be too expensive for use in developing countries, and that many of those countries’ other major health problems would be ignored.

Nevertheless, the report recommended the introduction of DNA technology into developing countries, suggesting that the new techniques should be directed primarily both at the early diagnosis of communicable diseases and at the control of the genetic anaemias that are a major health problem for many African and Asian countries.

Personalised medicine

|

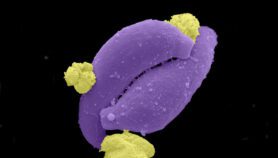

| Pharmacogenetics proposes to tailor medicines to individuals and their genes |

| Photo Credit: (IRD/Deliry Antheaume) |

These complex issues have recently been revisited in a report by the Royal Society, Personalised Medicines: Hopes and Realities.

I also chaired the committee that prepared this latest report. It examined the current and future role of pharmacogenetics (the study of how people’s genetic makeup determines their response to drugs), both in the discovery and development of new drugs and in providing safer and more personalised treatment to patients.

In general, we supported the idea that a genetic approach to drug development offers major advantages. But we were much more cautious about the application of pharmacogenetics to improving healthcare.

We accepted that there seems to be clear evidence — not least from cancer research — that the analysis of common diseases at the molecular level, and the subsequent identification of separate causal entities, is likely to lead to major therapeutic advances.

But we were more cautious about the potential value of community-wide genetic testing as an approach to improving the efficacy of drugs and avoiding their side-effects.

In particular, we pointed out a number of potential problems. For example, the way the body handles many drugs is controlled by several different genes, each making a small contribution. We also pointed out that single-gene polymorphisms with a large effect on the way drugs are processed in the body seem to be rare.

Even establishing that an individual’s genetic makeup indicates improved efficacy, or increased side-effects, could be extremely difficult, not least because many older patients might take several drugs simultaneously, and the body’s handling of drugs appears to change with increasing age.

Furthermore, doctors need to be convinced that genetic testing is more effective than careful monitoring of a patient’s response to a particular drug. And finally, it is unclear who will give the appropriate advice in situations where such testing is applied in the clinic; should it be the hospital, the primary-care doctor, the pharmacist, or someone different from all of these?

For all these reasons, we felt it was essential that each drug was tested individually, and that large community studies, backed up by detailed cost-benefit analyses, should be undertaken to compare the effectiveness of genetic testing with standard monitoring.

A few studies of this type are underway in the United Kingdom. But as yet there is virtually no information available on these critical issues.

Developing countries

Our second report also revisited the issue of the potential value of pharmacogenetics to developing countries.

Pilot studies have already suggested that a better understanding of the genetic variation in response to drugs used to treat HIV/AIDS could avoid wasting valuable, and often costly, drugs on individuals whose genetic makeup means they are unlikely to respond well to them.

Similarly, the early identification of drug resistance in malarial parasites by DNA analysis has proven to be feasible in practice, although further analyses will be needed to show whether it is cheap and effective enough to replace existing approaches.

About 400 million people worldwide lack a particular red blood cell enzyme, which means they would be likely to develop severe anaemia if they took the anti-malarial drug primaquine.

The problem is that primaquine is the only drug available for the treatment of Plasmodium vivax malaria, which affects hundreds of thousands of children worldwide. And because the P. vivax parasites have developed a level of resistance, the dose of primaquine has had to be increased.

A simple and cheap test for this form of enzyme deficiency is urgently needed. And recent studies have confirmed that inherited factors, particularly in those who carry genes for red blood cell disorders such as alpha thalassaemia and sickle-cell anaemia, provide a high level of protection against the serious complications of malaria. Such knowledge may be increasingly important when testing anti-malarial drugs or vaccines in the field.

There are many other examples of the potential value of pharmacogenetics for developing countries. But, just as in richer countries, each of these possibilities will have to be tested by large-scale studies, with detailed cost-benefit analyses, before we can say with any confidence whether they are an improvement on more traditional techniques.

Link to World Health Organization report, Genomics and World Health


Link to Royal Society report, Personalised Medicines: Hopes and Realities