By: Crispin Maslog

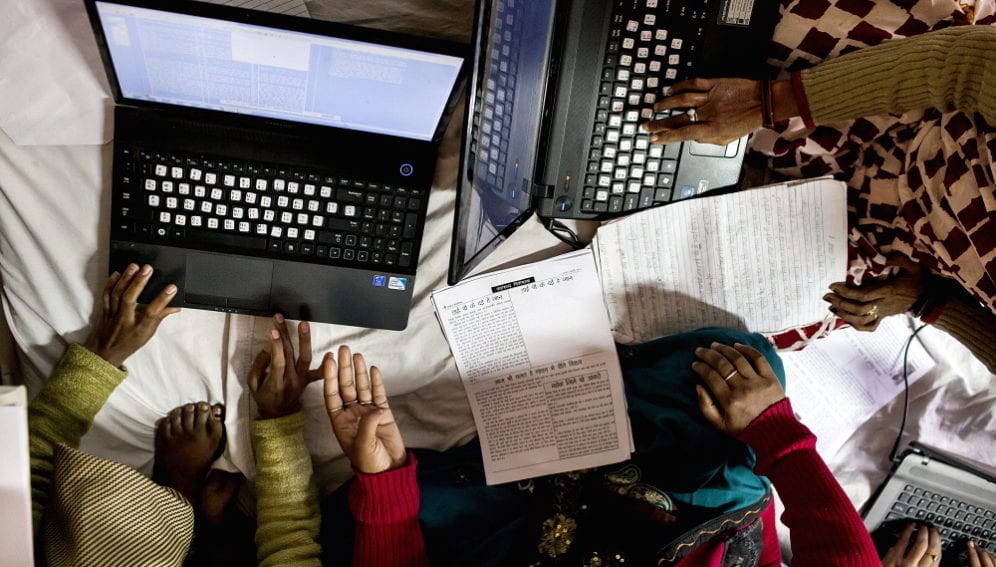

As we navigate the digital age, we are increasingly challenged by information overload. We now have more data at our fingertips, but are we any wiser? How does society handle so much knowledge? How can people use it?

This has given rise to the big data phenomenon, which refers simply to data sets so large and complex that traditional data processing applications are inadequate. Big data has given rise to a new field, data science, and to practitioners called data scientists. [1]

What these scientists do is make sense of the data. Their work involves using predictive analytics or other advanced methods to extract value from data and present it in understandable forms.

Accurate data are needed for more confident decision-making by governments, businesses, science institutions, and policymakers. And better decisions mean greater efficiency, reduced cost, minimised risk and positive results.

But big data cannot be grasped easily. It has to be packaged in small parcels in some logical order and visualised. Enter the new field of data visualisation. This refers to the techniques used to communicate big data by encoding it as visual objects contained in graphics. The purpose is to communicate information clearly and effectively.

Data visualisation tools are not just the usual charts and graphs used in Excel spreadsheets. They display data in more sophisticated ways such as infographics, dials and gauges, geographic maps, sparklines, heat maps, and detailed bar, pie and fever charts.

The images may also be interactive, allowing users to manipulate them for querying and analysis. Most software vendors embed data visualisation tools into their products.

Big data on fish and rice

An early example of big data is FishBase, the largest and most extensively accessed online database on adult finfish. The site is owned and managed by a consortium of universities, fishery centres and museums, as well as the FAO (UN Food and Agriculture Organization).

FishBase provides comprehensive species data, including information on taxonomy, geographical distribution, biometrics and morphology, behaviour and habitats, ecology and population dynamics as well as reproductive, metabolic and genetic data. It has links to information in other databases. [2]

An official FishBase information sheet indicated that the database included descriptions of 33,000 freshwater and marine species and subspecies, 304,500 common names in some 300 languages, information on distribution, ecology, taxonomy and population dynamics (growth life, length-width, etc.), photographs, and references to 51,600 works in the scientific literature. The site has about 800,000 unique visitors per month.

Such an impressive database can be useful in research and teaching, in decision-making and management of aquatic biodiversity, and in monitoring how climate change affects species richness. Data are provided by over 2,000 collaborators all over the world. In fact, because of demand for a database covering aquatic life forms other than fish, a spinoff called SeaLifeBase was created in 2006.

A more recent example of big data is the 3,000 Rice Genomes Project (3K RGP), launched this September by three research institutions: the Philippine-based International Rice Research Institute (IRRI), the Chinese Academy of Agricultural Sciences and the Beijing Genomics Institute. The program has sequenced 3,024 rice varieties from 89 countries.

“This massive dataset is a powerful resource for understanding natural genetic variation in rice as well as for large-scale discovery of new genes associated with economically important traits,” says Kenneth McNally, senior scientist at IRRI’s T.T. Chang Genetic Resources Center. [2]

McNally adds: “The 3K RGP will speed up tremendously the pace of developing improved rice varieties to feed a growing population, estimated to reach more than 9.6 billion by 2050, with half of humanity eating rice.” [2]

At the launch of the genome project, IRRI bioinformatics specialist Nickolai Alexandrov explained that data on 3,000 rice genomes can help scientists discover new locus-trait associations, find causative genome variations and introduce new varieties to breeding programs.

The IRRI-based international rice gene bank stores more than 127,000 rice varieties and accessions from all over the world.

Up to this point, Alexandrov tells SciDev.Net, access to the wealth of information about the hundred thousand plus rice varieties had been inadequate because in the old system, running “SNP-calling pipeline at IRRI’s server took about six hours for one genome, and 18,000 hours (750 days) for 3,000 genomes”.
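As a rough check on those figures, the short Python sketch below reproduces the arithmetic in the quote. The function names and the 100-machine scenario are illustrative assumptions, not a description of IRRI's actual pipeline; the only inputs taken from the article are six hours per genome and 3,000 genomes.

```python
import math

HOURS_PER_DAY = 24


def serial_runtime(genomes, hours_per_genome):
    """Return (total_hours, total_days) when genomes are processed one at a time."""
    total_hours = genomes * hours_per_genome
    return total_hours, total_hours / HOURS_PER_DAY


def parallel_days(genomes, hours_per_genome, workers):
    """Days needed if `workers` identical machines each handle one genome at a time.

    Hypothetical scenario: assumes perfect parallelism and no scheduling overhead.
    """
    batches = math.ceil(genomes / workers)
    return batches * hours_per_genome / HOURS_PER_DAY


if __name__ == "__main__":
    hours, days = serial_runtime(3000, 6)  # figures quoted in the article
    print(f"One server: {hours} hours ({days} days)")
    # The worker count below is an assumption chosen only for illustration.
    print(f"100 machines (assumed): {parallel_days(3000, 6, 100)} days")
```

Run one genome after another, the job takes 18,000 hours, or 750 days, which matches the quote. Under the idealised assumption of 100 machines working in parallel, the same workload would take about a week, which suggests why large shared computing resources matter for a dataset of this size.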

The new 3K RGP data analysis set is massive at 120 terabytes, which is well beyond the computing capacities of most research institutions.

Big data visualisation

The 3K RGP will thus make this gold mine of rice varieties easily accessible to scientists from all over the world. Rice breeders will now be able to mine the big data at IRRI for rice traits that include higher nutritional quality, tolerance of pests, diseases and environmental stresses such as flood and drought, and reduced greenhouse gas emissions.

“The great thing about the release of this dataset is that it is immediately useable,” says McNally. “It comes with tools to help researchers visualise and analyse genetic information.” The dataset is now available online, as an Amazon Web Services public data set. Accessing the data is free.

Big data can be a boon or a bane. We can let the statistics overwhelm us, or we can visualise and use them to improve our lives: inform our policies, plan our cities, improve our yields from the earth and seas, conquer sickness, mitigate disasters and predict the future.

Crispin Maslog is a former journalist and science journalism professor at the University of the Philippines Los Baños and director of the Silliman School of Journalism, Philippines. He is a consultant of the Asian Institute of Journalism and Communication and board chairperson of the Asian Media Information and Communication Centre, both based in Manila.

This article has been produced by SciDev.Net's South-East Asia & Pacific desk.

References

[1] Wikipedia. Big data.

[2] International Rice Research Institute. Big data on 3,000 rice genomes available on the AWS Cloud (IRRI, accessed 22 Sept. 2015).